The Infrastructure Race Behind AI's Next Act: Why SpaceX Buying xAI Signals a Fundamental Shift

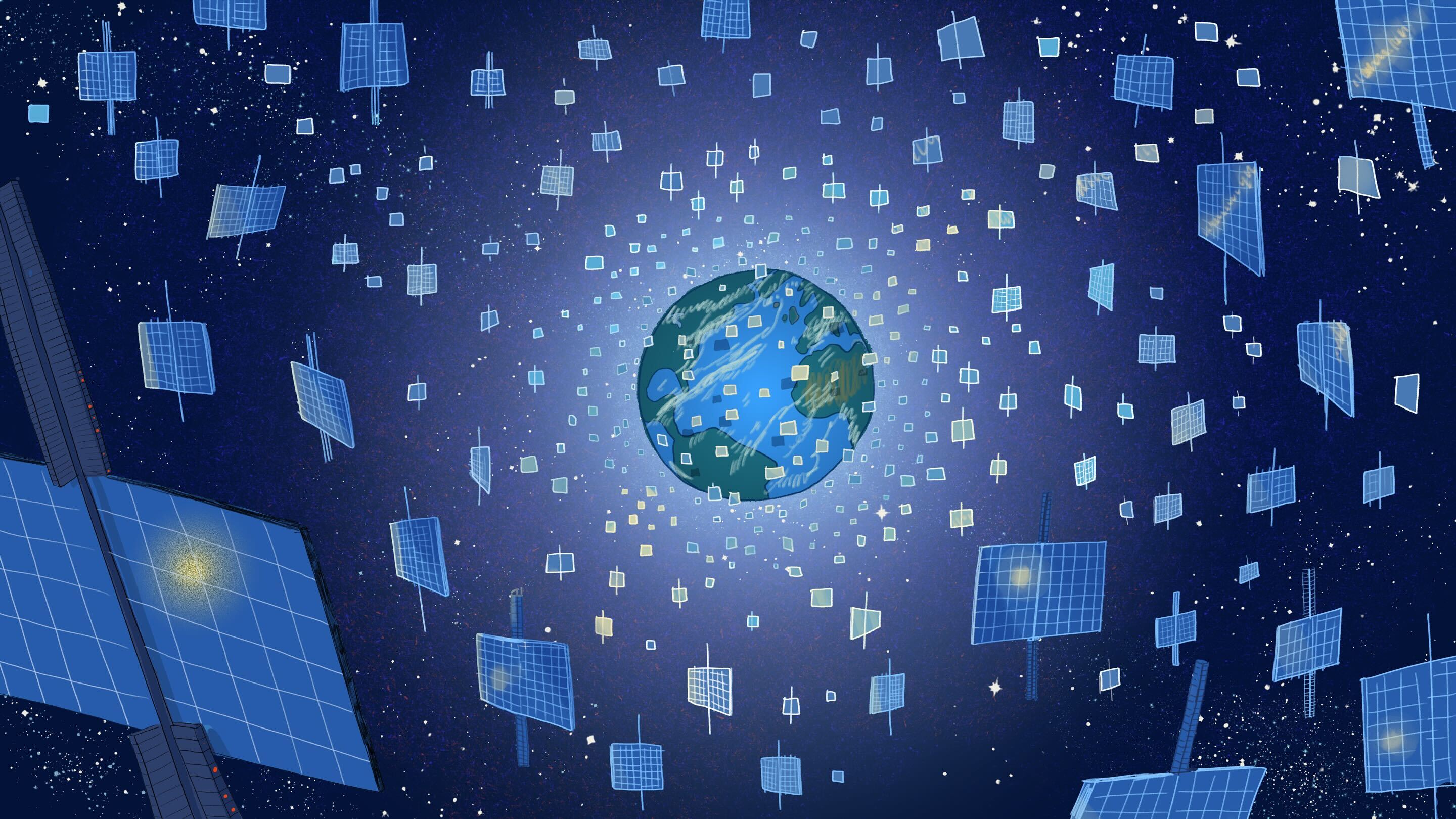

When SpaceX announced its acquisition of xAI this week, the tech press largely focused on Musk's corporate consolidation strategy. But buried in the announcement was a detail that should concern every AI company: the plan to build orbital data centers to handle AI's "massive computational demands." This isn't science fiction posturing—it's an acknowledgment that AI has an infrastructure problem that Earth-based solutions may not solve.

The timing is revealing. Just days earlier, we learned that aspiring Starlink competitor Logos Space Services secured FCC clearance to launch 4,000 satellites by 2035. Meanwhile, SpaceX itself reportedly filed to launch a million new satellites specifically for AI infrastructure. These aren't coincidences. They're symptoms of a bottleneck that the AI industry has been reluctant to discuss publicly: computational capacity is becoming the limiting factor for AI advancement, and traditional data center expansion can't keep pace.

Consider the evidence scattered across this week's headlines. Amazon is running one billion daily automated reasoning checks through the cvc5 solver across AWS services. OpenAI just launched Frontier, an enterprise platform designed to operationalize AI agents at scale. The company also expanded Codex with multi-agent capabilities that allow different AI models to collaborate on complex tasks. Each of these developments adds to a demand for compute that is already growing quickly.

The infrastructure strain extends beyond computation. Valve pushed back its Steam Machine launch due to storage and memory shortages—a seemingly unrelated gaming news item that actually reflects the same supply chain pressures affecting AI hardware procurement. When gaming consoles and frontier AI labs are competing for the same scarce components, something has to give.

What makes the SpaceX-xAI merger particularly significant is that it represents vertical integration as a competitive strategy. Musk is essentially admitting that relying on third-party infrastructure providers creates unacceptable constraints for AI development. If you can't get enough compute capacity from AWS, Google Cloud, or Microsoft Azure, you build your own satellites and launch them yourself.

This approach may sound extreme, but it's not without precedent or logic. Space-based data centers offer several theoretical advantages: virtually unlimited solar power, a cold deep-space background to radiate waste heat into, reduced latency for global services, and freedom from terrestrial regulatory constraints around energy consumption. The cooling claim deserves a caveat: a vacuum rules out convection, so all waste heat has to leave through radiators, and those grow large at data-center power levels. Whether these advantages actually materialize remains to be seen, but the fact that serious companies are exploring orbital computing suggests how desperate the search for solutions has become.
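To put the cooling point in perspective, here is a rough back-of-the-envelope sketch using the Stefan-Boltzmann law for radiated heat. Everything in it is an assumption chosen for illustration, not a figure from any announced design: a radiator at 300 K, an emissivity of 0.9, a single radiating face, and no sunlight or Earth infrared absorbed by the panel. The function name `radiator_area_m2` is hypothetical.

```python
# Stefan-Boltzmann constant in W / (m^2 * K^4).
SIGMA = 5.670374419e-8


def radiator_area_m2(heat_watts, temp_kelvin=300.0, emissivity=0.9):
    """Estimate the radiator area needed to reject heat_watts in vacuum.

    Idealized: one radiating face, uniform temperature, and no absorbed
    sunlight or Earth infrared. Real designs would need more area.
    """
    if heat_watts < 0:
        raise ValueError("heat_watts must be non-negative")
    if temp_kelvin <= 0:
        raise ValueError("temp_kelvin must be positive")
    if not 0 < emissivity <= 1:
        raise ValueError("emissivity must be in (0, 1]")
    # Radiated power per square meter: emissivity * sigma * T^4.
    flux_w_per_m2 = emissivity * SIGMA * temp_kelvin ** 4
    return heat_watts / flux_w_per_m2


if __name__ == "__main__":
    # Illustrative facility sizes only; none come from the article.
    for megawatts in (1, 10, 100):
        area = radiator_area_m2(megawatts * 1e6)
        print(f"{megawatts:>4} MW -> roughly {area:,.0f} m^2 of radiator")
```

Under these assumptions, each megawatt of waste heat needs on the order of 2,400 square meters of radiator. Running the radiator hotter shrinks that quickly, since output scales with the fourth power of temperature, but chips then have to tolerate a warmer coolant loop.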

The implications for the broader AI industry are profound. If infrastructure access becomes the primary competitive moat, we could see AI development increasingly concentrated among the few organizations capable of building or acquiring their own computational resources at scale. Academic institutions and startups would be left dependent on whatever capacity the giants choose to provide, or be priced out entirely.

Interestingly, Amazon seems to recognize this dynamic. Its Nova AI Challenge is giving university teams access to Nova Forge with "tools and computational resources previously unavailable to academic research programs." It's a generous gesture, but also an implicit admission that computational access is now the currency that determines who gets to participate in AI research.

The robotics sector offers an interesting counterpoint. Carbon Robotics' announcement of its Large Plant Model, trained on 150 million labeled images, demonstrates that edge computing and specialized models can achieve remarkable results without requiring orbital data centers. Its autonomous laser weeding robots now identify new weed species in real time without cloud connectivity or retraining. It's a reminder that clever engineering and domain-specific solutions can sometimes outperform brute-force computational approaches.

As we watch SpaceX file satellite permits and companies scramble for computational resources, we're witnessing more than a technical challenge. We're seeing the infrastructure requirements of AI fundamentally reshape corporate strategy, international competition, and access to innovation. The question isn't whether AI needs better infrastructure; that much is clear. The question is whether that infrastructure will be accessible enough to preserve the open, collaborative innovation that brought us this far, or whether we're entering an era where only vertically integrated giants can afford to play.