We're Teaching Robots With the Wrong Data

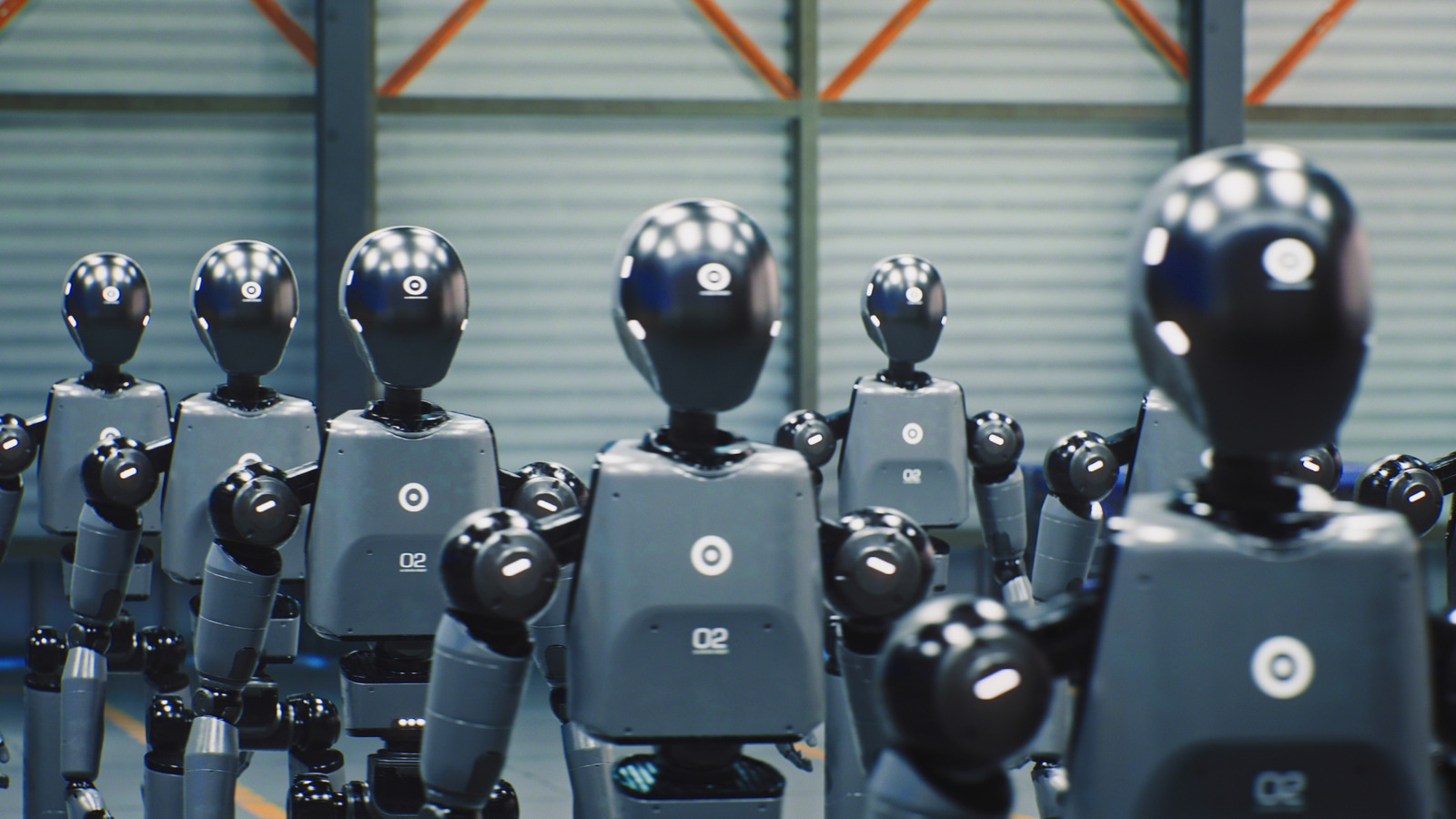

There's a peculiar cottage industry emerging in robotics right now. Companies are paying regular people—not engineers, not researchers, just anyone with a smartphone—to film themselves doing laundry, cooking dinner, or folding clothes. Others are offering remote control of robotic arms so operators can demonstrate basic manipulation tasks from their living rooms. The pitch is simple: help train the next generation of humanoid robots by showing them how humans move through the world.

It sounds wonderfully democratic. Crowdsourced robot training. AI learning from the masses instead of lab-coated specialists. But this data collection gold rush reveals something troubling about how the robotics industry thinks machines learn—and how far we still are from truly capable humanoid systems.

The fundamental issue is that movement data without context is nearly worthless for general-purpose robots. When you film yourself making a sandwich, the robot doesn't learn why you chose that particular knife, or how you adapted when the tomato was too ripe, or what you'd do if the bread was stale. It sees motion. Pixels changing. A sequence of joint angles and gripper positions. What the recording doesn't capture is the decision-making process, the sensory feedback, and the countless micro-adjustments that make human manipulation so robust.

This becomes especially clear when we look at recent failures in AI comprehension. Researchers at Zhejiang University demonstrated that an AI model called Centaur, which had been claimed to replicate human cognitive behavior, was actually just memorizing patterns. It knew answers but didn't understand questions. The same risk applies to movement data: robots can learn to replay motions without understanding the underlying task structure.

The manufacturing sector has already learned this lesson the hard way. Articles this week highlighted how deformable materials like fabric represent a critical test for physical AI precisely because you can't just replay recorded movements. Fabric behaves differently every time. The robot needs to understand material properties, not just motion sequences. Yet the crowdsourced training approach treats all tasks as if they're rigid-body manipulation problems that can be solved through imitation.

What makes this particularly concerning is the sheer scale of investment going into this flawed approach. The industry is collecting millions of hours of movement data while simultaneously struggling with basic comprehension problems. We're building enormous datasets of people opening doors, picking up objects, and walking up stairs—tasks that require understanding physics, material properties, and goal-directed behavior, not just motion replay.

The alternative isn't to abandon data collection. It's to fundamentally rethink what data we're collecting. Instead of just filming movements, we need systems that capture decision points, failure modes, and recovery strategies. We need data that includes why someone chose a particular approach, not just what movements they made. This might mean smaller datasets, more carefully curated, with human annotators explaining their reasoning at key moments.
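To make the contrast concrete, here is a minimal sketch of what such a record might look like next to a bare motion recording. Every name in it (`Frame`, `Annotation`, `Demonstration`, the three annotation kinds) is a hypothetical illustration, not a format any company or lab is known to use. The values echo the sandwich example and are made up.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Frame:
    """One timestep of raw motion: all that a bare recording captures."""
    t: float                   # seconds since the start of the demo
    joint_angles: List[float]  # radians, one entry per joint
    gripper_open: float        # 0.0 = closed, 1.0 = fully open


@dataclass
class Annotation:
    """Hypothetical note a demonstrator attaches to a key moment."""
    t: float        # time the note refers to
    kind: str       # assumed vocabulary: "decision", "failure", "recovery"
    rationale: str  # the demonstrator's own explanation


@dataclass
class Demonstration:
    task: str
    frames: List[Frame]
    annotations: List[Annotation] = field(default_factory=list)

    def annotations_of(self, kind: str) -> List[Annotation]:
        return [a for a in self.annotations if a.kind == kind]

    def unexplained_failures(self) -> List[Annotation]:
        """Failures with no recovery annotated at or after them."""
        recoveries = self.annotations_of("recovery")
        return [
            f for f in self.annotations_of("failure")
            if not any(r.t >= f.t for r in recoveries)
        ]


# Illustrative data only.
demo = Demonstration(
    task="slice tomato",
    frames=[
        Frame(t=0.0, joint_angles=[0.10, 0.20], gripper_open=1.0),
        Frame(t=0.5, joint_angles=[0.15, 0.25], gripper_open=0.4),
    ],
    annotations=[
        Annotation(0.2, "decision", "Tomato is very ripe, so use the serrated knife."),
        Annotation(0.4, "failure", "Slice tore instead of cutting cleanly."),
        Annotation(0.5, "recovery", "Eased off the pressure and used a sawing motion."),
    ],
)
```

Strip out `annotations` and what remains is exactly the "sequence of joint angles and gripper positions" described earlier. A check like `unexplained_failures()` is one way a curator might flag demonstrations where something went wrong but nobody recorded how it was fixed.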

Some researchers are already moving in this direction. Carnegie Mellon's Vision-Language-Navigation Challenge, launching its next phase this month, explicitly focuses on robots understanding natural language instructions in complex environments—pairing movement with meaning. That's the kind of data collection that might actually advance the field.

The current crowdsourced approach isn't just inefficient. It's training robots to be very good at mimicking surface-level behavior while remaining fundamentally brittle when faced with novel situations. We're teaching them to copy homework instead of understanding the subject. And no amount of copied homework will produce a robot that can truly adapt to the messy, unpredictable reality of human environments.