Your Robot Needs to Feel Things Before It Can Replace You

The robotics industry has a perception problem, and it's exactly backward. We obsess over humanoid form factors, billion-dollar compute infrastructure, and whether AI can pass increasingly obscure benchmarks. Meanwhile, the actual bottleneck keeping robots out of most factories has nothing to do with walking on two legs or understanding natural language.

It's that robots can't feel what they're touching.

XELA Robotics' recent enhancements to its uSkin sensor family—adding magnetic interference compensation and faster communication protocols—might not generate the breathless coverage that humanoid airport workers do. But tactile sensing represents the unglamorous foundation that every ambitious robotics deployment actually depends on. You can have the most sophisticated AI control system in the world, but if your gripper can't tell the difference between a successful grasp and a slip, you're running an expensive gamble on every pick.

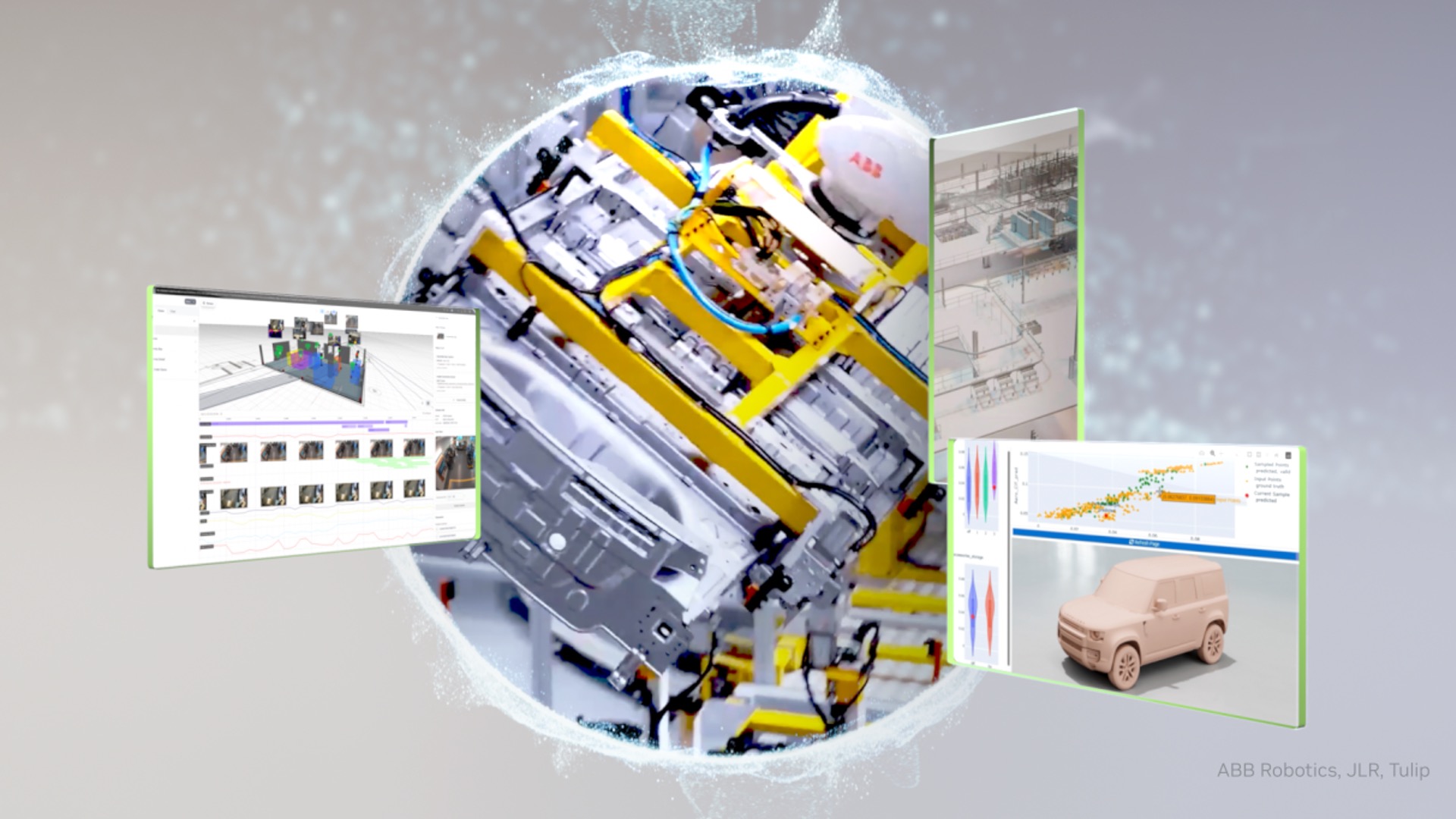

The timing of XELA's announcements alongside GFT Technologies' launch of inspection-plus-action robotic arms reveals where industrial automation is actually headed. It's not about replacing humans wholesale with bipedal androids. It's about giving existing robotic systems the sensory feedback they need to handle the messy, variable reality of manufacturing.

Consider what magnetic interference compensation actually enables: robots working with ferromagnetic materials without their sensors going haywire. That's not a minor detail. It's the difference between theoretical capability and deployable reliability in automotive, aerospace, and heavy manufacturing. Similarly, GFT's robots can both detect and remove defective parts only because they can sense what they are holding and manipulate it precisely.
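To make the idea concrete, here is a minimal sketch of one generic way to cancel an ambient magnetic disturbance: subtract the field shift seen by a reference sensor from every taxel reading. This is not XELA's method, which isn't described here. The data layout, the reference sensor, and the function name `compensate_interference` are all assumptions for illustration, as is the simplification that the disturbance is uniform across the sensor pad.

```python
def compensate_interference(taxels, reference, baseline_taxels, baseline_reference):
    """Remove a uniform ambient magnetic disturbance from taxel readings.

    Hypothetical layout (an assumption, not a vendor API):
      taxels             -- list of [x, y, z] raw readings, one per taxel
      reference          -- [x, y, z] reading from a sensor that contact does not affect
      baseline_taxels    -- taxel readings captured with no contact, no disturbance
      baseline_reference -- reference reading captured at the same time
    """
    # Whatever moved the reference sensor away from its baseline is treated
    # as the ambient disturbance (assumed identical at every taxel).
    disturbance = [r - b for r, b in zip(reference, baseline_reference)]

    # Contact signal = raw reading - resting value - ambient disturbance.
    return [
        [raw - base - d for raw, base, d in zip(taxel, taxel_base, disturbance)]
        for taxel, taxel_base in zip(taxels, baseline_taxels)
    ]


# Usage: a nearby steel part shifts every reading by the same ambient offset.
ambient = [10.0, -3.0, 4.0]
true_contact = [[0.0, 0.0, 5.0], [1.0, 0.0, 2.0]]
raw = [[c + a for c, a in zip(row, ambient)] for row in true_contact]
cleaned = compensate_interference(
    raw, ambient, [[0.0, 0.0, 0.0], [0.0, 0.0, 0.0]], [0.0, 0.0, 0.0]
)
```

In a real cell the disturbance is rarely uniform, which is part of why this is hard and why doing it well counts as a product feature rather than a footnote.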

The parallel developments in simulation technology, like NVIDIA's Omniverse and its reported 99% sim-to-real accuracy, make sense in this context. You can simulate robot motion planning and high-level decision-making effectively, but tactile interaction with real-world materials remains devilishly hard to model. Better sensors supply the real contact data those models need, so the sim-to-real gap narrows where it matters most.

What's striking is how little attention the sensor layer receives compared to the AI and actuator layers. Launchpad Build AI's Manufacturing Language Model promises to speed up automation design by 50%, but that acceleration is meaningless if the robots you're designing can't reliably manipulate the objects they're supposed to handle. Schaeffler's commitment to deploy 1,000 humanoids by 2032 sounds impressive until you remember that even humanoid robots need sophisticated tactile feedback to perform dexterous tasks—something current systems barely achieve.

The sensor companies aren't promising revolution or disruption. They're solving specific, unglamorous problems: reducing noise, increasing data throughput, compensating for electromagnetic interference. This is the plumbing work of robotics, and it's arguably more important than whether your robot looks human or can generate clever responses to prompts.

If industrial automation is going to scale beyond the controlled environments where it currently thrives, the breakthrough won't come from more impressive humanoid demos. It will come from robots that can feel a loose bolt, detect a misaligned part, or adjust grip pressure on the fly—the kind of sensory-motor integration that humans take completely for granted.
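Adjusting grip pressure on the fly is easier to picture with a small example. The sketch below assumes a tactile sensor that reports normal and shear force in newtons, a simple Coulomb friction model, and a gripper that accepts a force command. The function name `adjust_grip`, the friction coefficient, the safety factor, and the force cap are all illustrative assumptions, not values from any vendor.

```python
import math


def adjust_grip(current_command, normal_force, shear_x, shear_y,
                friction_coeff=0.5, safety_factor=1.5, max_command=20.0):
    """Return an updated grip force command (newtons, hypothetical units).

    Under Coulomb friction, the object starts to slip when the shear force
    exceeds friction_coeff * normal_force. We ask for enough normal force
    to stay below that limit by a safety factor.
    """
    shear = math.hypot(shear_x, shear_y)

    # Normal force needed to hold this shear load with some margin.
    required_normal = safety_factor * shear / friction_coeff

    if normal_force >= required_normal:
        # Already holding firmly enough; don't squeeze harder than needed.
        return current_command

    # Tighten by the shortfall, but never beyond the gripper's assumed limit.
    return min(current_command + (required_normal - normal_force), max_command)


# Usage: the part starts to pull sideways, so the controller tightens its grip.
new_command = adjust_grip(5.0, normal_force=5.0, shear_x=3.0, shear_y=4.0)
```

Without a shear reading there is nothing to feed into a loop like this, which is the whole argument in a dozen lines: the control logic is trivial, the sensing is not.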

The irony is that we already know this. Ask almost any robotics researcher and you'll hear that manipulation is harder than mobility, and that sensing is the bottleneck in manipulation. But the industry's marketing machine keeps selling the vision of humanoid workers while quietly scrambling to solve a touch problem that has gone unsolved since the first industrial robot arm.

Maybe it's time we paid more attention to the sensors than the servos.